What Is a Unified AI API Platform? Why Devs Switch in 2026

---

title: "What Is a Unified AI API Platform? Why Developers Are Switching in 2026"

description: "A unified AI API platform gives you one endpoint to access GPT-4o, Claude, Gemini, and dozens of other models—no code rewrites when you switch. Here's what it is, how it works, and whether you actually need one."

slug: "unified-ai-api-platform-one-api-all-models-developers"

date: "2026-01-15"

keywords: ["unified ai api platform one api all models developers", "ai api aggregation", "multi-model api", "openai alternative"]

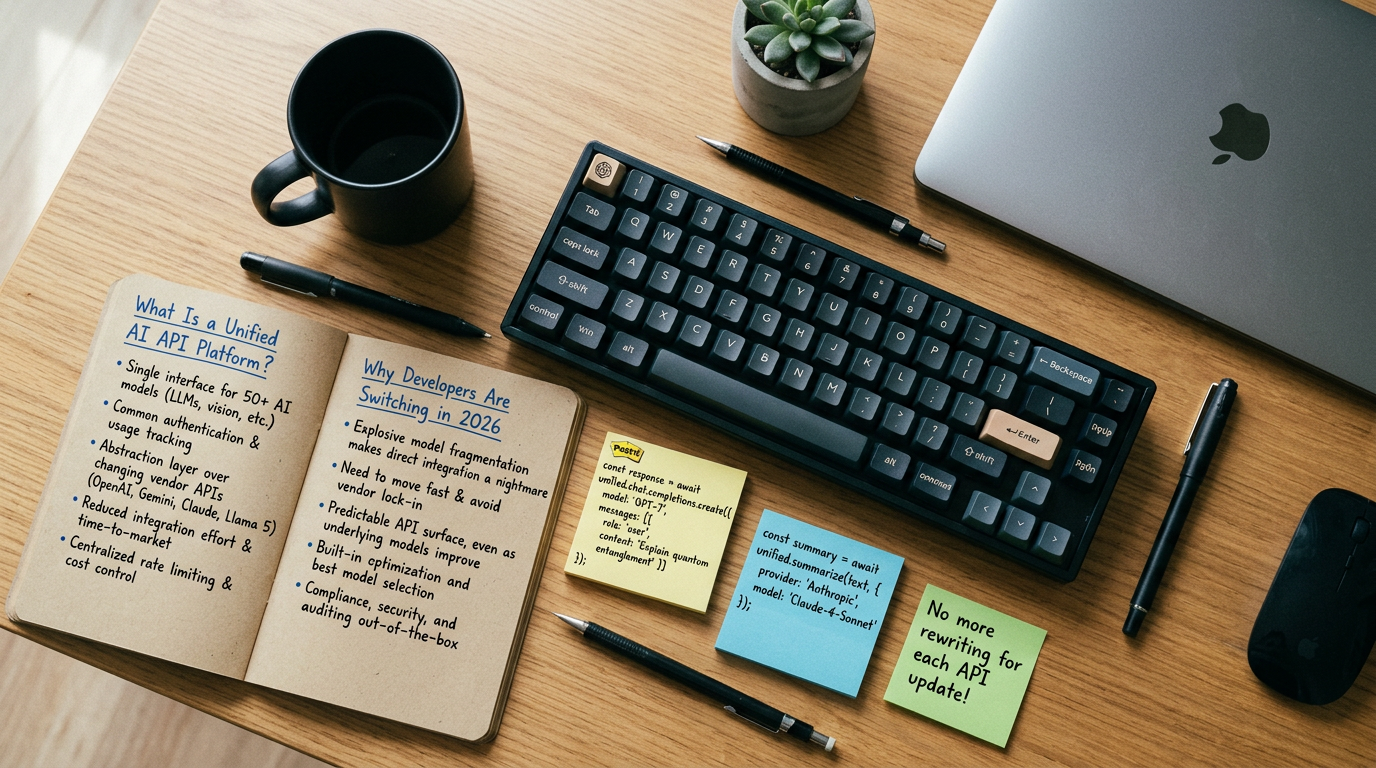

---What Is a Unified AI API Platform? Why Developers Are Switching in 2026

A unified AI API platform is a single API endpoint that routes requests to multiple AI model providers—OpenAI, Anthropic, Google, Mistral, and others—through one standardized interface. Instead of maintaining separate SDKs, authentication tokens, and request schemas for each provider, you write one integration and swap models by changing a single parameter. According to a 2026 analysis published by Commercial Appeal, organizations adopting “One API” aggregation platforms are reporting up to 80% savings on AI operational costs compared to managing multi-provider setups independently. That number is large enough to be suspicious—so this guide explains exactly what drives it, where it holds up, and where it doesn’t.

The Problem This Solves: Why Fragmentation Got Expensive

Through 2024 and 2025, most engineering teams ran what the industry started calling “AI subscription sprawl.” A team building a production app might be paying for OpenAI for text generation, Anthropic for safety-sensitive tasks, Stability AI for image generation, and a separate vector database provider—each with its own billing dashboard, rate limit policy, API key rotation process, and breaking-change cadence.

DIMA AI’s 2026 industry analysis puts it plainly: the era of managing five separate AI subscriptions is ending. The operational cost isn’t just the subscription fees—it’s the engineering hours spent on:

- Re-writing request/response adapters when a provider updates their API schema

- Building custom fallback logic when one provider experiences downtime

- Reconciling spend across four separate billing consoles

- Re-evaluating model selection when a new release changes the performance/cost ratio

A single mid-sized team integrating three AI providers independently can expect to absorb 40–80 hours of initial integration work per provider, plus ongoing maintenance overhead every time a provider ships a breaking change. Across a year, that compounds into a meaningful engineering tax.

What a Unified AI API Platform Actually Is

Per the 2026 definition published by AICC: “A Unified AI API is a single application programming interface that provides standardized access to multiple AI models and providers, allowing developers to switch models, manage usage, and control costs without changing application code.”

That last clause is the operative one: without changing application code.

The platform sits as a proxy layer between your application and the underlying model providers. Your code sends a request in one consistent format. The platform translates it to whatever schema the target provider expects, routes it, receives the response, normalizes it back into the standard format, and returns it to your application.

Core Architectural Components

A production-grade unified AI API platform has five layers working together:

1. Request normalization layer

Translates your standardized request format into provider-specific schemas. OpenAI uses a messages array; Anthropic also uses messages, but with a slightly different structure (for example, the system prompt is a separate top-level field); older Cohere endpoints use a single prompt string. The normalization layer handles this translation invisibly.
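To make the translation step concrete, here is a minimal sketch of what a normalization layer might do. The function names (`to_anthropic_style`, `to_prompt_style`), the `example-model` identifier, and the default `max_tokens` value are illustrative assumptions, not any platform's actual implementation.

```python
from typing import Any


def to_anthropic_style(request: dict[str, Any]) -> dict[str, Any]:
    """Translate an OpenAI-style chat request into an Anthropic-style payload.

    System messages move out of the list into a top-level "system" field.
    """
    messages = request["messages"]
    system_text = "\n".join(m["content"] for m in messages if m["role"] == "system")
    payload: dict[str, Any] = {
        "model": request["model"],
        "max_tokens": request.get("max_tokens", 1024),  # assumed default
        "messages": [m for m in messages if m["role"] != "system"],
    }
    if system_text:
        payload["system"] = system_text
    return payload


def to_prompt_style(request: dict[str, Any]) -> dict[str, Any]:
    """Flatten chat messages into one prompt string for prompt-based endpoints."""
    prompt = "\n".join(f"{m['role']}: {m['content']}" for m in request["messages"])
    return {"model": request["model"], "prompt": prompt}


standard_request = {
    "model": "example-model",  # placeholder identifier
    "max_tokens": 256,
    "messages": [
        {"role": "system", "content": "You are a contracts analyst."},
        {"role": "user", "content": "Summarize this contract."},
    ],
}

anthropic_payload = to_anthropic_style(standard_request)
prompt_payload = to_prompt_style(standard_request)
print(anthropic_payload)
print(prompt_payload)
```

A real layer would also have to map parameters such as stop sequences and tool definitions, and normalize the responses coming back; this sketch covers only the request shape.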

2. Routing and model registry

A live registry of available models, their capabilities, context window sizes, pricing per token, and current availability status. This is what lets you specify model: "best-available-claude" and have the platform resolve that to a specific model version.
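One way to picture alias resolution is a lookup table plus an ordered preference list. The sketch below is an assumption about how such a registry could work; the model IDs, context windows, and prices are placeholders rather than real catalog data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    model_id: str
    provider: str
    context_window: int       # tokens
    usd_per_1m_input: float   # placeholder pricing, not real quotes
    available: bool


# Hypothetical registry contents; a real platform would refresh these live.
REGISTRY = {
    entry.model_id: entry
    for entry in [
        ModelEntry("anthropic/claude-example-large", "anthropic", 200_000, 3.00, False),
        ModelEntry("anthropic/claude-example-small", "anthropic", 200_000, 0.80, True),
        ModelEntry("openai/gpt-example", "openai", 128_000, 5.00, True),
    ]
}

# Aliases map to an ordered preference list of concrete model IDs.
ALIASES = {
    "best-available-claude": [
        "anthropic/claude-example-large",
        "anthropic/claude-example-small",
    ],
}


def resolve(model: str) -> ModelEntry:
    """Resolve an alias or concrete ID to the first available registry entry."""
    for candidate in ALIASES.get(model, [model]):
        entry = REGISTRY.get(candidate)
        if entry is not None and entry.available:
            return entry
    raise LookupError(f"no available model for {model!r}")


resolved = resolve("best-available-claude")
print(resolved.model_id)
```

Because the first preference is marked unavailable in this made-up data, the alias resolves to the second entry. Concrete IDs pass through the same function unchanged.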

3. Fallback and failover logic

When a provider returns a 429 (rate limit) or 503 (service unavailable), the platform automatically retries against a configured backup provider. You define the fallback chain; the platform executes it.
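The fallback behavior described above can be sketched in a few lines. `ProviderError`, `call_with_fallback`, and the `primary`/`backup` model names are hypothetical; a production implementation would typically add backoff, timeouts, and per-provider retry budgets.

```python
from typing import Callable, Sequence

RETRYABLE_STATUS = {429, 503}  # rate limit, service unavailable


class ProviderError(Exception):
    """Hypothetical error type carrying the provider's HTTP status code."""

    def __init__(self, status: int, message: str = ""):
        super().__init__(message or f"provider returned {status}")
        self.status = status


def call_with_fallback(
    chain: Sequence[str], send: Callable[[str], str]
) -> tuple[str, str]:
    """Try each model in order; move on only for retryable statuses."""
    last_error = None
    for model in chain:
        try:
            return model, send(model)
        except ProviderError as err:
            if err.status not in RETRYABLE_STATUS:
                raise  # e.g. a 400 or 401 will not be fixed by another provider
            last_error = err
    raise RuntimeError("all providers in the fallback chain failed") from last_error


def fake_send(model: str) -> str:
    """Stand-in for a real network call: the primary is rate limited."""
    if model == "primary":
        raise ProviderError(429)
    return f"response from {model}"


used_model, text = call_with_fallback(["primary", "backup"], fake_send)
print(used_model, text)
```

Non-retryable errors are re-raised immediately, on the assumption that a malformed request would fail the same way on every provider in the chain.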

4. Cost and usage metering

Unified billing view across all providers. Token counts, cost per request, spend by model, spend by team or project. This is where the cost savings claim becomes credible: visibility you didn’t have before enables optimization you couldn’t do before.
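As a rough illustration of the metering idea, the sketch below computes cost per request from token counts and rolls it up by model or by team. The record shape, model names, and per-token prices are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    team: str
    model: str
    input_tokens: int
    output_tokens: int


# Hypothetical (input, output) prices in USD per 1M tokens.
PRICES = {
    "model-a": (5.00, 15.00),
    "model-b": (1.00, 4.00),
}


def request_cost(record: UsageRecord) -> float:
    """Cost of one request in USD, from its token counts."""
    in_price, out_price = PRICES[record.model]
    return (record.input_tokens * in_price + record.output_tokens * out_price) / 1_000_000


def spend_by(records: list[UsageRecord], key: str) -> dict[str, float]:
    """Total spend grouped by a record attribute such as 'model' or 'team'."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, key)] += request_cost(record)
    return dict(totals)


records = [
    UsageRecord("search", "model-a", 1_000_000, 0),
    UsageRecord("search", "model-b", 2_000_000, 500_000),
    UsageRecord("support", "model-a", 0, 1_000_000),
]

by_model = spend_by(records, "model")
by_team = spend_by(records, "team")
print(by_model)
print(by_team)
```

Grouping the same records two ways is the point: once every request flows through one meter, questions like "which team is driving spend on the expensive model" become a single query.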

5. Credential vault

Your application holds one API key (the platform key). The platform stores and rotates provider credentials. This meaningfully reduces your secret management surface area.

How Model Switching Actually Works (With Code)

The non-obvious part is how seamless model switching is in practice. Here’s what it looks like:

```python
import openai

# Client configured against a unified platform endpoint
client = openai.OpenAI(
    api_key="YOUR_UNIFIED_PLATFORM_KEY",
    base_url="https://api.your-unified-platform.com/v1",
)

# Switch from GPT-4o to Claude 3.5 Sonnet by changing one string.
# No schema changes. No new SDK import. No new auth flow.
response = client.chat.completions.create(
    model="anthropic/claude-3-5-sonnet",  # <-- change this line only
    messages=[{"role": "user", "content": "Summarize this contract."}],
    max_tokens=1024,
)

print(response.choices[0].message.content)
```

The platform accepts OpenAI’s request schema, which most teams are already using, and handles translation to Anthropic’s native format internally. Your existing OpenAI SDK integration works unchanged. This is the core value proposition: the switching cost drops from “rewrite the integration layer” to “change a model string.”

Market Landscape: The Major Platforms in 2026

Unified.to’s 2026 roundup of unified API platforms makes a critical observation: “platforms labeled ‘unified APIs’ vary widely in architecture, data handling, pricing, and operational maturity.” Not all of them do the same thing.

| Platform | Model Coverage | Fallback Logic | Self-Host Option | Primary Use Case |
|---|---|---|---|---|
| OpenRouter | 200+ models | Yes (manual config) | No | Developer/research, cost arbitrage |
| LiteLLM | 100+ models | Yes (configurable) | Yes (open source) | Teams wanting control, enterprise |
| Portkey | 50+ models | Yes (automated) | Partial | Production apps, observability |
| Helicone | 30+ models | No (observability focus) | Yes | Logging and cost analytics |
| AWS Bedrock | 20+ models (AWS-curated) | Partial | AWS infra only | Teams already on AWS |
| Azure AI Foundry | 50+ models | Yes | Azure infra only | Enterprise Microsoft shops |

What this table doesn’t show: data residency guarantees, latency added by the proxy layer (typically 20–80ms overhead), and contractual liability for prompt data.

Note: If you’re integrating multiple AI models into one pipeline, AtlasCloud provides unified API access to 300+ models including Kling, Flux, Seedance, Claude, and GPT — one API key, no per-provider setup. New users get a 25% credit bonus on first top-up (up to $100).

Frequently Asked Questions

How much does a unified AI API platform cost compared to calling OpenAI or Anthropic directly?

Most unified AI API platforms charge a routing markup of 5–15% on top of the underlying model cost. For example, if GPT-4o costs $5.00 per 1M input tokens directly from OpenAI, you might pay $5.25–$5.75 through an aggregator like OpenRouter or LiteLLM Cloud. However, platforms like Portkey and Helicone offer free tiers up to 10,000 requests/month, and some enterprise tiers starting at $99/month
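The markup arithmetic in this answer can be checked with a short sketch. The `aggregator_price` helper is hypothetical; the $5.00 direct price and the 5–15% markup range are the figures quoted above, not independently verified pricing.

```python
def aggregator_price(direct_usd_per_1m: float, markup: float) -> float:
    """Price per 1M tokens after applying a fractional routing markup."""
    return round(direct_usd_per_1m * (1 + markup), 2)


DIRECT_USD_PER_1M = 5.00  # direct price used in the example above

low = aggregator_price(DIRECT_USD_PER_1M, 0.05)
high = aggregator_price(DIRECT_USD_PER_1M, 0.15)
print(f"Through an aggregator: ${low:.2f} to ${high:.2f} per 1M input tokens")
```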

Does routing through a unified AI API add latency to my requests?

Yes, but typically within acceptable bounds. Most production-grade unified API platforms add 20–80ms of overhead per request for routing, authentication, and logging. Benchmarks published in early 2026 show OpenRouter averaging 34ms added latency, LiteLLM proxy at ~22ms when self-hosted, and Portkey at ~41ms on their managed cloud. For comparison, GPT-4o itself returns first tokens in 400–900ms an

Which unified AI API platform has the best model coverage in 2026?

As of Q1 2026, OpenRouter leads with 180+ models including GPT-4o, Claude 3.5 Sonnet, Gemini 1.5 Pro, Mistral Large, Llama 3.3 70B, DeepSeek-V3, and several fine-tuned variants. LiteLLM supports 100+ providers programmatically and scores highest in developer flexibility benchmarks (GitHub stars: 32k+, 4.8/5 on developer surveys). Portkey covers 50+ models but ranks #1 for enterprise features like

Can I use a unified AI API platform as a drop-in replacement for the OpenAI SDK?

Yes, most major unified platforms are fully OpenAI SDK-compatible. You change exactly two lines of code: the base_url (e.g., from https://api.openai.com/v1 to https://openrouter.ai/api/v1) and your API key. The model parameter then accepts identifiers like anthropic/claude-3.5-sonnet or google/gemini-1.5-pro instead of gpt-4o. In a 2026 developer migration survey of 1,200 engineers, 78% reported c
