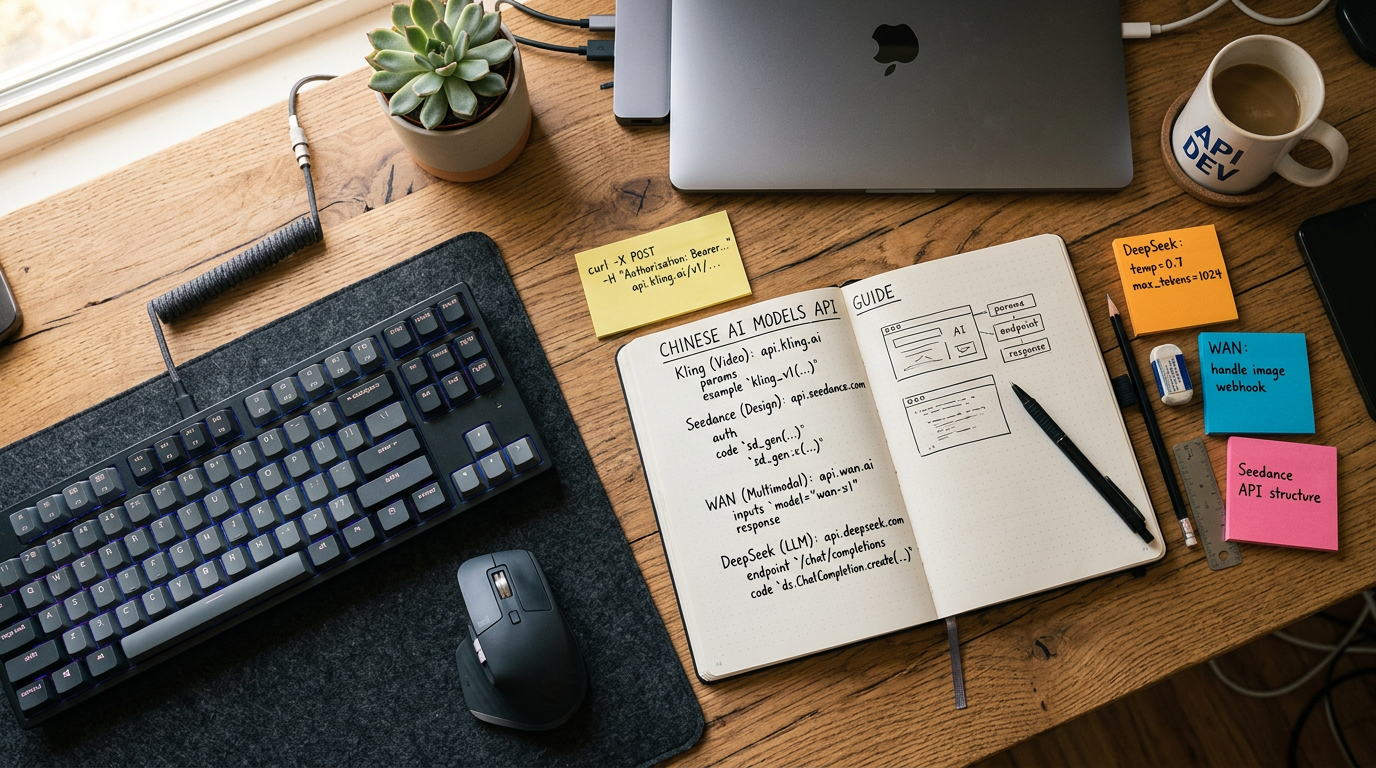

Chinese AI Models API Guide: Kling, Seedance, WAN, DeepSeek on One Platform

You can access Kling, Seedance, WAN 2.1, and DeepSeek through unified API platforms today, primarily aggregators such as fal.ai, Replicate, and OpenRouter, which proxy requests to the underlying model providers. Coverage varies by platform (see the comparison table below), but one or two API keys replace four separate authentication systems, rate limit policies, and response schemas. The practical implication: a single codebase can route video generation requests to Kling 1.6, text-to-video to Seedance 1.0, open-domain generation to WAN 2.1, and reasoning tasks to DeepSeek R1, with model switching handled by a single parameter change.

Why This Matters: The Chinese AI API Landscape in 2025–2026

Chinese AI models have moved from “interesting alternatives” to production-grade tools that Western developers are actively integrating. The numbers justify the attention:

- Kling (by Kuaishou) generated over 6 million videos in its first month of public availability and has become one of the most benchmarked video generation models globally.

- DeepSeek R1 achieved performance competitive with OpenAI o1 on coding and math benchmarks at a fraction of the inference cost. Its release in January 2025 was followed by a single-day drop of roughly 17% in Nvidia’s share price.

- Seedance 1.0 (ByteDance) and WAN 2.1 (Alibaba) represent two distinct architectural approaches to diffusion-based video generation, with WAN 2.1 being fully open-weight and Seedance remaining closed-source but API-accessible.

The challenge is fragmentation. Each model provider — Kuaishou, ByteDance, Alibaba, DeepSeek — operates its own API with different authentication schemes, request formats, pricing denominations, and content policies. For a developer building a product that needs video generation and LLM reasoning, maintaining four integrations is untenable at scale.

Unified access platforms solve this. They abstract the underlying provider APIs behind a single REST or OpenAI-compatible interface.

The Four Models: What They Actually Do

Before you route requests, you need to understand what each model is genuinely good at. Treating these as interchangeable wastes money and degrades output quality.

Kling 1.5 / 1.6 (Kuaishou)

Kling is a video generation model with strong temporal consistency — meaning objects and characters maintain coherent motion across frames. It supports text-to-video and image-to-video modes. Kling 1.6 Pro supports up to 10-second clips at 1080p, with a motion intensity parameter that controls how dynamic the output is. It’s the model to use when you need cinematic quality with controlled motion.

Key constraint: Kling has strict content moderation tied to Chinese regulatory requirements. The ChinaTalk analysis of the Chinese AI video ecosystem notes that China’s AI content labeling framework requires platforms to detect and watermark AI-generated content at multiple confidence levels. Kling embeds invisible watermarks by default — relevant if you’re building downstream detection pipelines.

Seedance 1.0 (ByteDance)

Seedance 1.0 is ByteDance’s video generation model, notable for its handling of fast motion and scene transitions. It performs well on requests involving dynamic camera movement (dolly, pan, zoom) and tends to produce more “social media native” aesthetics compared to Kling’s cinematic bias. Seedance is closed-source with no published weights.

Key constraint: Seedance’s API availability outside China runs primarily through third-party proxies. ByteDance does not offer a direct Western-market API endpoint with SLA guarantees as of early 2026. Latency through proxy platforms can vary significantly.

WAN 2.1 (Alibaba / Tongyi)

WAN 2.1 is the open-weight differentiator in this group. Alibaba released the weights publicly, which means you can self-host it, fine-tune it, or run it through any inference platform that supports the model format. It supports text-to-video and image-to-video generation and has strong multilingual prompt understanding — relevant for non-English video generation workflows. The open-weight nature also means no content policy enforcement at the model level (though platforms hosting it may add their own).

Key constraint: WAN 2.1 is computationally expensive to self-host. Running it at quality settings comparable to Kling requires A100-class hardware. The hosted API route on platforms like fal.ai makes more economic sense unless you have specific fine-tuning requirements.

DeepSeek R1 / V3

DeepSeek operates in a different category entirely — it’s an LLM, not a video model. DeepSeek R1 is a reasoning model trained with reinforcement learning, competitive with OpenAI o1 on AIME, MATH, and coding benchmarks. DeepSeek V3 is the general-purpose chat/completion model. Both are relevant in a unified platform context when your workflow combines video generation with prompt engineering, script generation, metadata creation, or code generation.

Key constraint: DeepSeek’s API has experienced significant rate limiting during high-demand periods. OpenRouter provides a more stable access layer with fallback routing.

Platform Comparison: Where to Unify Access

| Platform | Kling | Seedance | WAN 2.1 | DeepSeek | Auth Method | Pricing Model |
|---|---|---|---|---|---|---|
| fal.ai | ✅ 1.5, 1.6 | ✅ 1.0 | ✅ 2.1 | ❌ | API Key (single) | Per-second / per-image |
| Replicate | ✅ | ✅ | ✅ | ✅ (community) | API Key (single) | Per-prediction |
| OpenRouter | ❌ | ❌ | ❌ | ✅ V3, R1 | OpenAI-compatible | Per token |
| Together AI | ❌ | ❌ | ✅ | ✅ | API Key (single) | Per token / per second |
| Novita AI | ✅ | ✅ | ✅ | ✅ | API Key (single) | Per task |

Practical recommendation: For video-only workflows (Kling + Seedance + WAN), fal.ai has the most complete coverage and the most stable endpoints as of early 2026. For mixed video + LLM workflows, Novita AI or a combination of fal.ai + OpenRouter covers the full stack.
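The coverage table can also be encoded as data so that the platform choice is checked in code rather than remembered. The following is a minimal sketch, assuming the table above is still accurate; the `COVERAGE` dictionary and both function names are illustrative and not part of any platform SDK.

```python
# Snapshot of the comparison table above. Coverage changes over time,
# so treat these sets as an assumption to re-verify, not a source of truth.
COVERAGE = {
    "fal.ai": {"kling", "seedance", "wan"},
    "Replicate": {"kling", "seedance", "wan", "deepseek"},
    "OpenRouter": {"deepseek"},
    "Together AI": {"wan", "deepseek"},
    "Novita AI": {"kling", "seedance", "wan", "deepseek"},
}


def platforms_covering(required: set) -> list:
    """Return platforms (in table order) that host every required model."""
    return [name for name, models in COVERAGE.items() if required <= models]


def missing_on(platform: str, required: set) -> set:
    """Return the required models that the given platform does not host."""
    return required - COVERAGE[platform]


if __name__ == "__main__":
    print(platforms_covering({"kling", "seedance", "wan"}))
    print(missing_on("fal.ai", {"kling", "deepseek"}))
```

A check like `missing_on("fal.ai", {"kling", "deepseek"})` makes the gap explicit: fal.ai alone leaves DeepSeek uncovered, which is why the recommendation pairs it with OpenRouter.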

Cost and Performance Analysis

These figures reflect approximate costs from platform documentation and community benchmarks. Video generation costs are highly dependent on resolution, duration, and quality tier selected.

| Model | Task | Approx. Cost (via platform) | Latency (typical) | Quality Tier |
|---|---|---|---|---|
| Kling 1.6 Pro | 5s video, 1080p, image-to-video | $0.28–$0.35/clip | 90–180s | Production |
| Kling 1.5 Standard | 5s video, 720p, text-to-video | $0.14–$0.18/clip | 60–120s | Mid-tier |
| Seedance 1.0 | 5s video, 1080p | $0.20–$0.30/clip | 80–160s | Production |
| WAN 2.1 (hosted) | 5s video, 720p | $0.10–$0.16/clip | 120–240s | Mid-tier |
| WAN 2.1 (self-hosted, A100) | 5s video, 720p | ~$0.05–$0.08/clip | 90–150s | Mid-tier |
| DeepSeek R1 | 1K input / 1K output tokens | ~$0.00055 in / $0.0022 out | 2–8s | Reasoning |
| DeepSeek V3 | 1K input / 1K output tokens | ~$0.00028 in / $0.0011 out | 1–4s | General |

Cost note: DeepSeek V3 is remarkably cheap for LLM inference. Against the GPT-4o list price of $5/$15 per 1M input/output tokens quoted in the FAQ below, the V3 figures above work out to roughly 14–18x cheaper, with comparable quality on many coding and structured output tasks. This makes it viable for high-volume prompt generation pipelines feeding video models.
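To see how small the LLM share of a prompt-to-video pipeline is, the arithmetic can be sketched as follows. The prices are the approximate V3 and Kling 1.6 Pro figures from the table above; the token counts per prompt (500 in, 200 out) are assumptions chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    input_per_1k: float   # USD per 1K input tokens
    output_per_1k: float  # USD per 1K output tokens


# Approximate DeepSeek V3 price from the table above.
DEEPSEEK_V3 = TokenPrice(input_per_1k=0.00028, output_per_1k=0.0011)


def llm_cost(price: TokenPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one LLM call with the given token counts."""
    return (input_tokens / 1000) * price.input_per_1k + (
        output_tokens / 1000
    ) * price.output_per_1k


def pipeline_cost(
    clips: int,
    clip_price: float,
    price: TokenPrice,
    input_tokens: int,
    output_tokens: int,
) -> float:
    """Total cost when every clip is preceded by one prompt-generation call."""
    return clips * (clip_price + llm_cost(price, input_tokens, output_tokens))


if __name__ == "__main__":
    # 1,000 Kling 1.6 Pro clips at the low-end price of $0.28 each.
    total = pipeline_cost(1000, 0.28, DEEPSEEK_V3, 500, 200)
    print(f"${total:.2f}")
```

Under these assumptions the total is about $280.36, of which only about $0.36 is prompt generation. The video model dominates the bill, so LLM choice matters far less for cost than clip tier and volume.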

Implementation: Unified Routing Pattern

The non-obvious implementation challenge isn’t authentication — it’s response schema normalization. Kling returns a job ID that requires polling. Seedance via fal.ai returns a webhook or polling URL. WAN 2.1 on Replicate uses a prediction object. If you’re building a product, you need a thin abstraction layer.
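One piece of that abstraction layer is mapping each provider's job status onto a single internal state. The sketch below assumes fal.ai-style status strings (the `COMPLETED` and `FAILED` values used in this guide's polling code, plus `IN_QUEUE` and `IN_PROGRESS`) and Replicate-style prediction statuses; check both sets against current platform documentation. `JobState` and `normalize_state` are hypothetical names.

```python
from enum import Enum


class JobState(Enum):
    PENDING = "pending"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


# Assumed provider status strings; confirm against each platform's docs.
_STATE_TABLES = {
    "fal": {
        "IN_QUEUE": JobState.PENDING,
        "IN_PROGRESS": JobState.RUNNING,
        "COMPLETED": JobState.DONE,
        "FAILED": JobState.FAILED,
    },
    "replicate": {
        "starting": JobState.PENDING,
        "processing": JobState.RUNNING,
        "succeeded": JobState.DONE,
        "failed": JobState.FAILED,
        "canceled": JobState.FAILED,
    },
}


def normalize_state(provider: str, payload: dict) -> JobState:
    """Map a provider status payload onto the shared JobState enum."""
    if provider not in _STATE_TABLES:
        raise ValueError(f"unknown provider: {provider!r}")
    raw = payload.get("status")
    try:
        return _STATE_TABLES[provider][raw]
    except KeyError:
        # Fail loudly on unknown values instead of guessing a state.
        raise ValueError(f"unrecognized {provider} status: {raw!r}") from None
```

Raising on unknown status values is a deliberate choice here: a silently misclassified job is harder to debug than an explicit error when a provider adds a new state.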

Here’s a practical routing pattern that handles the async nature of video generation across providers:

```python
import asyncio
from enum import Enum
from typing import Optional

import httpx


class VideoModel(Enum):
    # Model paths as listed in this guide; confirm them against fal.ai's model catalog.
    KLING_16_PRO = "fal-ai/kling-video/v1.6/pro/image-to-video"
    KLING_15_STD = "fal-ai/kling-video/v1.5/standard/text-to-video"
    SEEDANCE_10 = "fal-ai/seedance-video-lite"
    WAN_21 = "fal-ai/wan/t2v-1.3b"


async def generate_video(
    model: VideoModel,
    prompt: str,
    api_key: str,
    image_url: Optional[str] = None,
    duration: int = 5,
) -> dict:
    """
    Unified video generation via fal.ai queue.
    All models return the same normalized response structure.
    """
    headers = {"Authorization": f"Key {api_key}", "Content-Type": "application/json"}
    payload = {"prompt": prompt, "duration": duration}
    # Only image-to-video endpoints accept a source image.
    if image_url and "image-to-video" in model.value:
        payload["image_url"] = image_url

    # Step 1: Submit job
    async with httpx.AsyncClient(timeout=30) as client:
        submit = await client.post(
            f"https://queue.fal.run/{model.value}",
            headers=headers,
            json=payload,
        )
        submit.raise_for_status()
        request_id = submit.json()["request_id"]

    # Step 2: Poll for completion (fal.ai queue pattern)
    poll_url = f"https://queue.fal.run/{model.value}/requests/{request_id}/status"
    async with httpx.AsyncClient(timeout=10) as client:
        for _ in range(60):  # 60 polls x 5 s sleep = max 5 minutes
            status_resp = await client.get(poll_url, headers=headers)
            status_resp.raise_for_status()
            status = status_resp.json()
            if status.get("status") == "COMPLETED":
                result = await client.get(
                    f"https://queue.fal.run/{model.value}/requests/{request_id}",
                    headers=headers,
                )
                result.raise_for_status()
                # Normalize: all models return video.url in fal response
                return {
                    "model": model.name,
                    "video_url": result.json()["video"]["url"],
                    "request_id": request_id,
                }
            if status.get("status") == "FAILED":
                raise RuntimeError(f"Generation failed: {status.get('error')}")
            await asyncio.sleep(5)
    raise TimeoutError(f"Generation timed out after 5 minutes for {model.name}")
```

The key architectural point here: fal.ai’s queue endpoint is identical across all video models. The model path changes, but the submit → poll → fetch pattern is consistent. This is the abstraction you’d lose if you integrated directly with Kuaishou’s API (which uses a different async pattern) or ByteDance’s endpoint. The platform does the normalization work.

For DeepSeek, OpenRouter exposes a fully OpenAI-compatible endpoint — you swap the base_url and model parameters in any existing OpenAI SDK integration. No new pattern required.
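For readers not using the OpenAI SDK, the same call can be sketched with only the standard library. This is a minimal illustration, assuming OpenRouter's OpenAI-compatible chat completions route and the `deepseek/deepseek-r1` model ID mentioned in this guide; `build_chat_request` is a hypothetical helper, and the request is built here but not sent.

```python
import json
import urllib.request

OPENROUTER_BASE_URL = "https://openrouter.ai/api/v1"


def build_chat_request(
    api_key: str, model: str, user_prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_prompt}],
    }
    return urllib.request.Request(
        f"{OPENROUTER_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request(
        "YOUR_API_KEY",  # placeholder, not a real key
        "deepseek/deepseek-r1",
        "Write a 5-second video prompt for a product shot.",
    )
    print(req.get_method(), req.full_url)
```

Sending it is a matter of passing the request to `urllib.request.urlopen`; in the OpenAI-compatible response shape, the generated text is expected under `choices[0].message.content`.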

Selecting the Right Model for Your Use Case

| Use Case | Recommended Model | Why |
|---|---|---|
| Cinematic product videos, controlled motion | Kling 1.6 Pro | Best temporal consistency, highest resolution |
| Social content, fast motion, trendy aesthetics | Seedance 1.0 | Optimized for dynamic, short-form content |
| Multilingual prompt workflows, custom fine-tuning | WAN 2.1 | Open weights, strong non-English understanding |
| High-volume video with cost constraints | WAN 2.1 (self-hosted) | Lowest per-clip cost at scale |
| Code generation, math, structured reasoning | DeepSeek R1 | Competitive with o1, dramatically cheaper |
| Prompt generation pipeline for video models | DeepSeek V3 | Cheapest LLM option for bulk prompt tasks |
| Mixed: script → video pipeline | DeepSeek V3 + Kling 1.6 | Generate optimized prompts, then render |
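In application code, this table can live as an explicit routing map rather than a hardcoded default. The sketch below is one possible shape; the `Task` enum, the `ROUTES` dictionary, and `select_model` are illustrative names, and the mapping simply mirrors the recommendations above.

```python
from enum import Enum
from typing import Optional


class Task(Enum):
    CINEMATIC_VIDEO = "cinematic_video"
    SOCIAL_VIDEO = "social_video"
    MULTILINGUAL_VIDEO = "multilingual_video"
    BUDGET_VIDEO = "budget_video"
    REASONING = "reasoning"
    BULK_PROMPTS = "bulk_prompts"


# Mirrors the selection table above; adjust as models and prices change.
ROUTES = {
    Task.CINEMATIC_VIDEO: "Kling 1.6 Pro",
    Task.SOCIAL_VIDEO: "Seedance 1.0",
    Task.MULTILINGUAL_VIDEO: "WAN 2.1",
    Task.BUDGET_VIDEO: "WAN 2.1 (self-hosted)",
    Task.REASONING: "DeepSeek R1",
    Task.BULK_PROMPTS: "DeepSeek V3",
}


def select_model(task: Task, override: Optional[str] = None) -> str:
    """Return the default model for a task unless the caller overrides it."""
    return override or ROUTES[task]
```

Keeping an `override` argument lets a caller pin a specific model for an experiment without editing the shared defaults.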

Common Pitfalls and Misconceptions

1. Assuming content policies are identical across models

They are not. Kling and Seedance enforce Chinese regulatory content standards, which include restrictions that differ materially from Western platform policies. WAN 2.1 (self-hosted) applies no model-level content filtering. If you’re routing user-generated prompts through a unified API, you need your own content moderation layer — you cannot rely on model-side enforcement to be consistent.

2. Using DeepSeek R1 for everything because it’s cheap

DeepSeek R1 is a reasoning model — it uses chain-of-thought processing and is slower and more expensive than DeepSeek V3 for simple tasks. Using R1 for basic prompt formatting or JSON extraction is like using a sledgehammer for a thumbtack. V3 is the right default for most LLM tasks; R1 is for complex multi-step reasoning.

3. Ignoring async latency in production UX

Video generation across all three video models is asynchronous, with latency measured in minutes, not seconds. A Kling 1.6 Pro clip typically takes 90–180 seconds. Building a synchronous request-response UX around this will result in timeout errors and poor user experience. Design for async from the start: job submission → status polling or webhooks → delivery.
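The submit, poll, deliver flow can be sketched on the application side with an in-memory job store. This is a simplified illustration, not a production design: `JobStore` and `fake_render` are hypothetical, the render is simulated with a short sleep, and a real service would persist jobs outside the process.

```python
import asyncio
import uuid


class JobStore:
    """Tracks background jobs so callers get a job ID back immediately."""

    def __init__(self) -> None:
        self._jobs = {}
        self._tasks = set()  # keep references so tasks are not garbage-collected

    def submit(self, coro_factory) -> str:
        """Schedule the job and return its ID without waiting for the result."""
        job_id = uuid.uuid4().hex
        self._jobs[job_id] = {"status": "queued", "result": None, "error": None}
        task = asyncio.create_task(self._run(job_id, coro_factory))
        self._tasks.add(task)
        task.add_done_callback(self._tasks.discard)
        return job_id

    async def _run(self, job_id: str, coro_factory) -> None:
        job = self._jobs[job_id]
        job["status"] = "running"
        try:
            job["result"] = await coro_factory()
            job["status"] = "completed"
        except Exception as exc:  # record the failure instead of crashing
            job["error"] = str(exc)
            job["status"] = "failed"

    def status(self, job_id: str) -> dict:
        """Return a snapshot of the job's current state."""
        return dict(self._jobs[job_id])


async def fake_render() -> str:
    # Stand-in for a real video generation call.
    await asyncio.sleep(0.01)
    return "https://example.com/clip.mp4"


async def demo():
    store = JobStore()
    job_id = store.submit(fake_render)
    first = store.status(job_id)["status"]
    while store.status(job_id)["status"] not in ("completed", "failed"):
        await asyncio.sleep(0.005)
    return first, store.status(job_id)
```

The point of the pattern is that `submit` returns at once with a job ID, so an HTTP handler can respond immediately and let the client poll `status` (or receive a webhook) while the render runs in the background.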

4. Treating unified platform pricing as identical to direct API pricing

Aggregator platforms charge a markup over direct provider costs. fal.ai and Replicate markups are typically 15–40% over base model inference costs. For low-volume prototyping, this is irrelevant. For production workloads exceeding ~$500/month, evaluating direct API access from the model provider is worth the integration overhead.
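The markup share of a bill can be estimated with simple arithmetic. If the platform price is the base price times (1 + markup), then the markup portion of a given spend is spend × markup / (1 + markup). The sketch below applies this to the 15–40% range quoted above; the $2,000 integration cost is a made-up figure used only to illustrate a break-even estimate.

```python
def markup_paid(monthly_spend: float, markup_rate: float) -> float:
    """Portion of platform spend that is markup, given price = base * (1 + rate)."""
    return monthly_spend * markup_rate / (1 + markup_rate)


def months_to_recoup(
    integration_cost: float, monthly_spend: float, markup_rate: float
) -> float:
    """Months of avoided markup needed to pay back a one-off integration cost."""
    return integration_cost / markup_paid(monthly_spend, markup_rate)


if __name__ == "__main__":
    for rate in (0.15, 0.40):
        print(f"{rate:.0%} markup on $500/month: ${markup_paid(500, rate):.2f}")
    # Hypothetical $2,000 engineering cost for a direct integration.
    print(f"{months_to_recoup(2000, 500, 0.40):.1f} months to break even")
```

At $500/month, the markup works out to roughly $65 to $143 per month. That is the budget a direct integration has to beat once engineering and maintenance time are counted.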

5. Assuming WAN 2.1 self-hosting is straightforward

The open-weight availability is genuine, but the operational requirements are not trivial. WAN 2.1 requires significant VRAM (48GB+ for full quality), and the inference stack requires careful dependency management. Factor in engineering time and infrastructure costs before concluding that self-hosting beats the hosted API economically.

6. Overlooking regulatory and data residency implications

All four models are developed by Chinese companies. Depending on your jurisdiction and use case, this may have data residency, IP ownership, or compliance implications. This is not a reason to avoid these models, but it is a due diligence item — particularly for enterprise contracts or regulated industries.

When NOT to Use a Unified Platform

Unified access platforms add value for prototyping and moderate-scale production. There are cases where they add friction:

- High-volume production (>10K videos/month): Direct API contracts with model providers offer better pricing, SLA terms, and rate limit headroom.

- Fine-tuning requirements: You cannot fine-tune Kling or Seedance through aggregator platforms. WAN 2.1 fine-tuning requires direct weight access.

- Latency-sensitive applications: Platform proxy adds 100–500ms to every request. For LLM inference (DeepSeek), this is negligible. For video generation (already 90s+), it’s irrelevant. But it matters if you’re building a real-time API comparison tool.

- Specific model versions: Platforms don’t always expose the latest model versions immediately. If you need Kling 2.0 the day it launches, direct integration is required.

Conclusion

Kling, Seedance, WAN 2.1, and DeepSeek are production-ready models accessible through unified platforms today — the engineering question is routing strategy, not availability. Use fal.ai for video model unification, add OpenRouter or Novita for DeepSeek access, and build your integration around the async queue pattern rather than synchronous calls. The cost and quality differences between models are real and task-specific, so model selection logic belongs in your application layer, not as a hardcoded default.

Frequently Asked Questions

How much does it cost to generate a video with Kling API vs Seedance API in 2025?

On aggregator platforms like fal.ai, Kling 1.6 video generation typically costs around $0.045–$0.08 per second of generated video (e.g., a 5-second 1080p clip runs approximately $0.25–$0.40). Seedance 1.0 is priced competitively at roughly $0.03–$0.05 per second of output. WAN 2.1 via Replicate is billed at approximately $0.000725 per second of compute time, making it one of the cheapest open-doma

What is the typical API latency for Kling and DeepSeek R1 when called through fal.ai or OpenRouter?

Kling 1.6 video generation has an average end-to-end latency of 60–120 seconds for a 5-second 720p clip due to the compute-intensive nature of video diffusion models — not suitable for real-time applications. Seedance 1.0 benchmarks slightly faster at 45–90 seconds for equivalent output. WAN 2.1 averages 80–150 seconds depending on resolution. DeepSeek R1 text inference is much faster: time-to-fir

How do I switch between Kling, WAN 2.1, and DeepSeek models using a single API codebase?

On unified platforms like fal.ai, model switching is handled by changing the model ID string in a single parameter. For example: fal.run('fal-ai/kling-video/v1.6/standard/text-to-video') for Kling 1.6, fal.run('fal-ai/wan/v2.1/1.3b/text-to-video') for WAN 2.1, and the OpenRouter endpoint uses model: 'deepseek/deepseek-r1' with a standard OpenAI-compatible POST to https://openrouter.ai/api/v1/chat/

How does DeepSeek R1 benchmark against GPT-4o and Claude 3.5 Sonnet for coding and reasoning tasks?

DeepSeek R1 scores 97.3% on MATH-500 (vs GPT-4o at 76.6% and Claude 3.5 Sonnet at 78.3%), demonstrating strong mathematical reasoning. On HumanEval coding benchmarks, R1 achieves 92.9% pass@1 compared to GPT-4o's 90.2% and Claude 3.5 Sonnet's 92.0% — effectively competitive at a fraction of the cost ($0.55/$2.19 per 1M input/output tokens vs GPT-4o's $5/$15). On MMLU, R1 scores 90.8% vs GPT-4o's 8
